Idiocy for All and the Rise of International Large Scale Educational Assessments

Almost any education-related topic seems to turn into an overheated debate, provoking very strong gut reactions and diminishing any hope for productive discussions that engage in careful analysis of contrasting perspectives and forms of evidence. This is certainly the case with International Large Scale Educational Assessments (ILSEAs), like PISA or TIMSS: the debate around them lacks nuanced discussion and methodical analysis of their role in improving student achievement.

We should not be surprised by the polarization of such debates. Politicians, researchers, teachers, administrators, students, and their families have very strong opinions and perspectives about what works in education, what needs to be fixed, and what the “fix” should be. Each of these stakeholders attacks the others using several arguments, but two of the most common are “You are an idiot; everybody agrees with my idea, which is just good common sense” coupled with a dismissive comment, “your idea lacks any evidence, and even if you have some, it is not as strong as mine.” In fact, it seems that when discussing education, the tendency to be idiotic is quite common, and in many cases proudly so. By “idiotic,” we are not referring to the common usage, meaning somebody who is not very clever, but to the original meaning of the word in Ancient Greek, as Walter C. Parker (2005) explains:

…idiocy shares with idiom and idiosyncratic the root idios, which means private, separate, self-centered – selfish. “Idiotic” was in the Greek context a term of reproach. When a person’s behavior became idiotic – concerned myopically with private things and unmindful of common things – then the person was believed to be like a rudderless ship, without consequence save for the danger it posed to others. This meaning of idiocy achieves its force when contrasted with politës (citizen) or public. Here we have a powerful opposition: the private individual versus the public citizen (p. 344).

To understand the extent to which educational stakeholders are exhibiting idiotic attitudes towards ILSEAs, and education reforms more broadly, one would first need to examine the discourse around, and reactions to, ILSEAs and their results. Research on ILSEAs has primarily focused on student performance and disparities in outcomes by gender and socioeconomic status, with more limited research on stakeholder attitudes. Our research sought to fill this gap by looking specifically at whether national-level educational stakeholders (e.g., ministries of education, national policymakers, other national political and social actors) value these types of international measures of student attainment and to what extent they have integrated ILSEAs into their work at the national level.

ILSEAs as Tools of Legitimation

Our exploratory review of the ILSEA literature found that policymakers appear to be using these assessments as tools to legitimate existing or new educational reforms, although there is little evidence of any positive or negative causal relationship between ILSEA participation and reform implementation. That is, educational reform efforts have often already been proposed or underway, and policymakers use ILSEA results as they become available to argue for or against new or existing legislation.

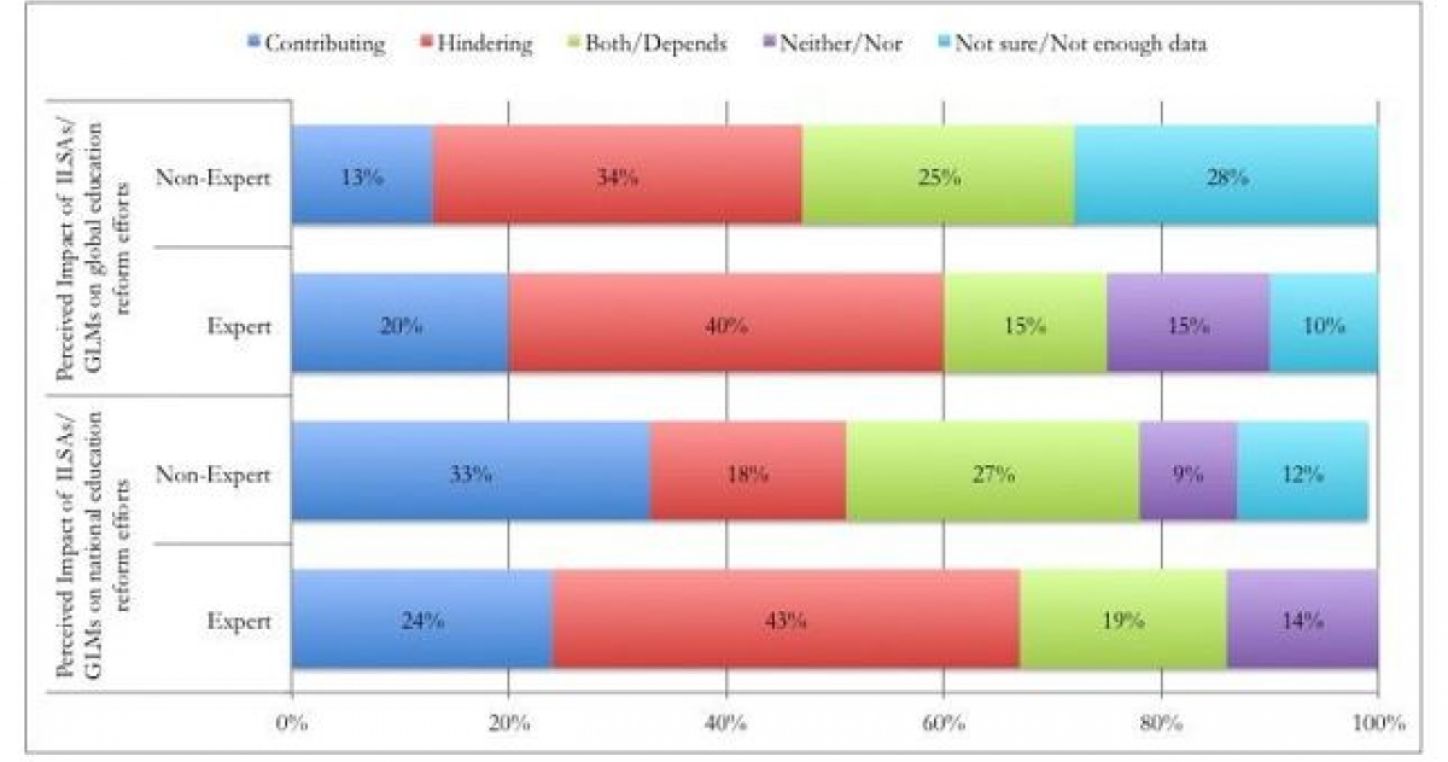

At the same time, results from our two surveys of ILSEA experts, policymakers, and educators pointed to a growing perception among respondents that ILSEAs are having an effect on national educational policies, with 38% of all survey respondents stating that ILSEAs were often misused in national policy contexts. Interestingly, experts are generally more critical in their assessment of ILSEAs than non-experts, with 43% arguing that ILSEAs are often being misused. They explain that policymakers have little understanding of ILSEAs and use them for “ceremonial effects,” while at the same time arguing that these assessments are too broad and decontextualized to be used meaningfully in national contexts. Based on their professional and personal experiences, respondents were divided over whether ILSEAs actually contribute to or hinder national education reform efforts.

Survey respondents’ perceptions of the contribution and/or hindrance of ILSAs/GLMs to global reform efforts

Note: Includes responses from both the expert and non-expert surveys.

Perhaps the most significant finding associated with the use of ILSEAs in the literature we reviewed is the way in which new conditions for educational comparison have been made possible at the national, regional, and global levels. On the one hand, ILSEAs have the potential to provide governments and education stakeholders with useful and relevant modes of comparison that purportedly allow for the assessment of educational achievement both within cities, states, and regions, and between countries. On the other hand, it is dangerous to use idiotic lenses to analyze ILSEAs’ results (the good, the bad, and the ugly) without considering the strong influence of unequal educational opportunities in various contexts or acknowledging the broader political or economic agendas driving the production and use of ILSEAs in education.

Generating oversimplified narratives using ILSEAs, disregarding the different contexts and multiple obstacles, showing a lack of concern for the educational opportunities and rights of millions of children, and focusing all the energies on justifying your own opinions, while quickly discarding any counterevidence to legitimate your interests and benefits, is a genuine form of educational idiocy. The best defense against educational idiocy? We have already discovered it, discussed it, experimented with it, assessed it, and considered the evidence: avoid the exclusive reliance on simplistic quantitative measures in determining education outcomes, shift attention away from short-term strategies designed to quickly climb the ILSEA rankings, and implement proven strategies that reduce inequalities in opportunities in order to improve long-term outcomes. Above all, stop pushing for education reforms based on a single, narrow yardstick of quality.

More than ever, we need to consider multiple types and sources of data, we need to explore more meaningful ways of reporting comparative data, we need to recognize the importance of the civic and public purposes of education, and we need to involve our diverse communities (parents, teachers, education unions, administrators, community leaders, and students) in a public dialogue about what education is and ought to be about. Overcoming education idiocy would thus entail a return to educational questions that are larger and more important than how a country performs on international large-scale assessments.

The opinions expressed in this blog are those of the author and do not necessarily reflect any official policies or positions of Education International.